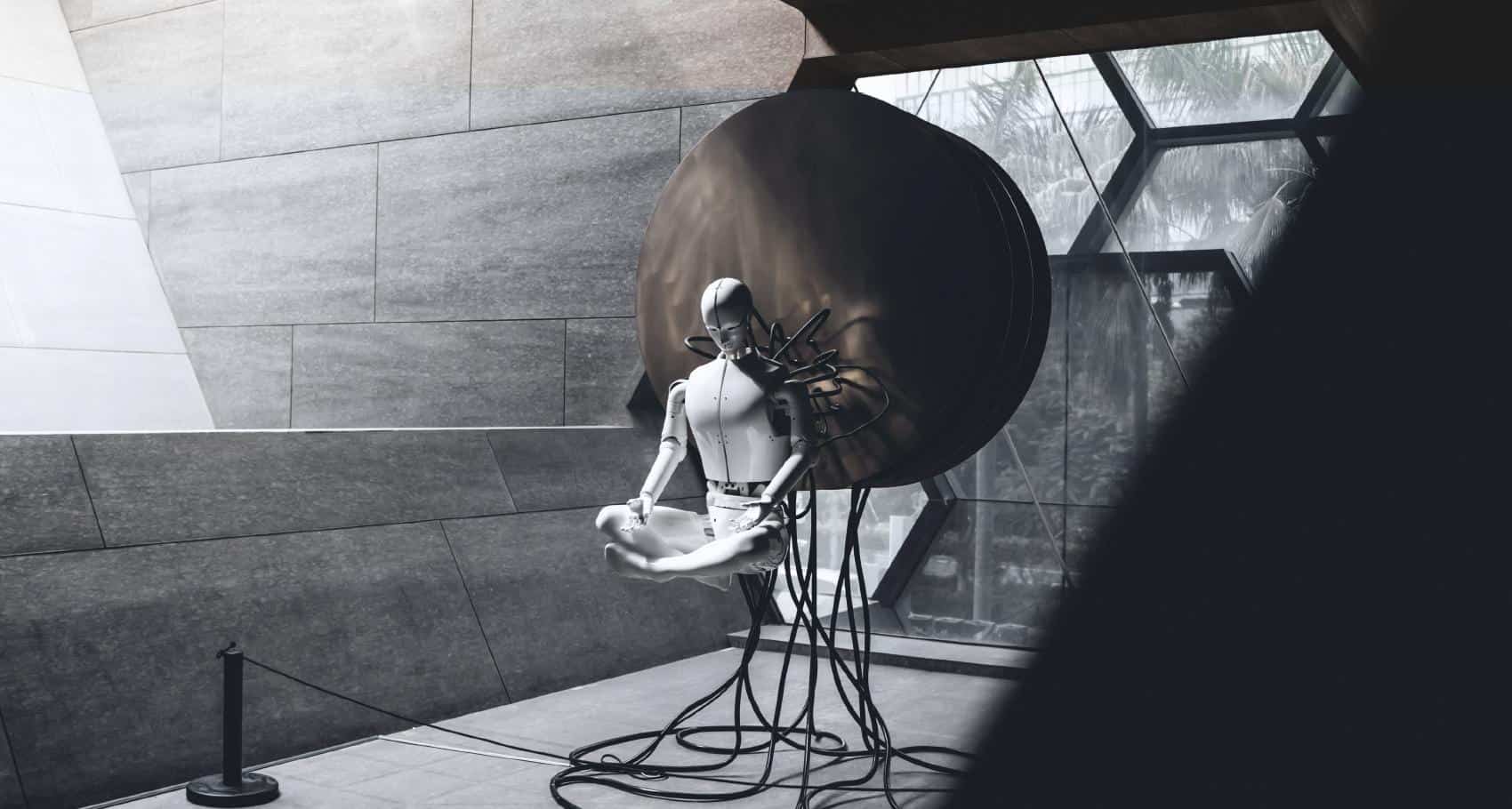

PyThia: trusted AI for your organization

PyThia: TRUSTED AI FOR YOUR ORGANIZATION

At code4thought®, we want to help society address the challenges and injustices imposed by automated decision-making technology.

As the adoption of AI/ML models increases, so do the criticality and gravity of their decisions. For that reason, Code4Thought is developing the PyThia technology to ensure that AI/ML systems are Fair, Accountable and Explainable.

This technology is designed to be guided by humans, adhering to the Human-In-The-Loop principle, and has the following characteristics:

It is cross-platform and model-agnostic

It is reusable in the long run rather than solving only ad hoc problems

It is sustainable, as it is based solely on open and vendor-agnostic technologies and components

AI ADVISORY

Authority once based on human intuition and reflection is gradually being handed to algorithms, and the gravity of decisions made by automated algorithmic systems is increasing. As daily examples show, algorithms gone awry have serious consequences for businesses and people. In some areas, errors can be particularly dire:

Cyber-Security & Privacy

Image and Facial Recognition (in particular)

Financial Services, M&A and Due Diligence

Autonomous Decision-Making with Social Impact | Public Sector

Algorithm design and auditing, even in the hands of wicked smart coders, does more harm than good when those coders have little to no experience in (1) designing a bias-free system and (2) auditing it to check for gaps. We need humans in the loop to ensure algorithms are as bias-free as possible. Those humans must have deep experience in auditing software systems with ML tooling, guided by people deeply experienced in the process. Our team is ready to help and advise on best practices for setting up the processes and infrastructure that will ensure your AI is Responsible and can be Trusted.

OUR CLIENTS

*in partnership with SIG